Terms of Use | Privacy Notice | Data Privacy Framework | Cookie Notice | DMCA | Whistleblowing |

© Altair Engineering Inc. All Rights Reserved.

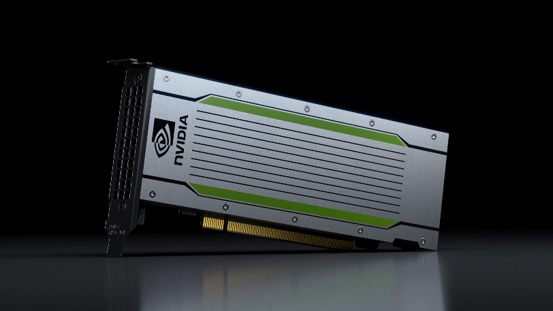

The new PNY EGX system brings HPC and AI to the edge. It ships with four NVIDIA Tesla T4 GPUs, the world's most efficient accelerator for AI inference workloads. The Tesla T4 is part of the NVIDIA AI Inference Platform, which supports all major AI frameworks and provides comprehensive tooling and integrations that greatly simplify the development and deployment of advanced AI.

Developers can tap the power of Turing Tensor Cores directly through NVIDIA TensorRT, software libraries, and integrations with all major AI frameworks. These tools let developers choose the optimal precision for each AI application, achieving dramatic performance gains without compromising the accuracy of results. Libraries such as cuDNN, cuSPARSE, CUTLASS, and DeepStream accelerate key neural network functions and use cases such as video transcoding. Integrations with all major AI frameworks, freely available as NVIDIA GPU Cloud (NGC) containers, let developers transparently harness GPU computing innovations across end-to-end AI workflows, from training neural networks to running inference in production applications. The PNY EGX system comes with full NGC support, making it an ideal platform for accelerating a wide range of workloads with NGC containers.
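Choosing a lower precision, such as INT8 instead of FP32, is one way inference tools trade a small amount of numerical accuracy for speed. The sketch below illustrates the general idea with symmetric per-tensor INT8 quantization in plain Python. It is an assumption-laden illustration, not TensorRT's API or its calibration algorithm; the function names `quantize_int8` and `dequantize_int8` and the sample weights are hypothetical.

```python
# Hypothetical sketch of symmetric per-tensor INT8 quantization.
# This illustrates reduced-precision inference in general; it is NOT
# TensorRT's API or its calibration procedure.

def quantize_int8(values):
    """Map floats to int8 codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Avoid a zero scale when every value is zero.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the symmetric int8 range.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize_int8(codes, scale):
    """Recover approximate float values from the int8 codes."""
    return [c * scale for c in codes]


weights = [0.52, -1.30, 0.07, 0.91, -0.44]  # hypothetical sample weights
codes, scale = quantize_int8(weights)
restored = dequantize_int8(codes, scale)
# The rounding error per value is at most half of one quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes)
print(f"scale={scale:.5f}, max abs error={max_error:.5f}")
```

Each weight now fits in one byte instead of four, and the reconstruction error stays within half a quantization step (`scale / 2`). Production toolchains typically choose scales from calibration data rather than from a single tensor's maximum value.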